AI Music Detector Uncovered: How They Spot Fake Tracks

- Nov 28, 2025


An AI music detector is exactly what it sounds like: a specialized tool built to sniff out music that was either created or messed with by artificial intelligence. Think of it as a digital watchdog for the music industry, designed to verify a track's authenticity and protect artists in a world where the line between human and machine is getting incredibly blurry.

The New Frontier of Music and the Need for a Digital Watchdog

Imagine queuing up what you think is a powerful new track from your favorite artist, only to discover it’s a deepfake cooked up by a machine. This isn't science fiction anymore. It’s a real problem challenging artists, labels, and fans every single day. We're standing on the edge of a new frontier where AI can compose, produce, and even "perform" with a startlingly human touch.

This explosion in technology opens up some exciting creative doors, but it also kicks open the door to some serious risks. Unauthorized voice cloning, blatant copyright infringement, and streaming platforms getting flooded with synthetic tracks aren't just distant threats—they're active problems. These issues can dilute royalty pools, mislead listeners, and completely undermine an artist's brand and hard work.

Why Human Ears Can No Longer Tell the Difference

Here’s the hard truth: AI music has gotten so sophisticated that our own ears can't keep up. That's not just a gut feeling; the data backs it up.

A recent Deezer and Ipsos survey was a real eye-opener: an astonishing 97% of listeners could not tell fully AI-generated tracks from human-made ones. In other words, the average person can no longer reliably separate a human-made song from one generated by AI. You can dig into the full report from the Deezer and Ipsos survey about AI music.

This is precisely why an AI music detector has become a non-negotiable tool. It offers a technical solution to a problem our own senses can no longer reliably solve. This tech acts as a crucial verification layer, bringing back a much-needed measure of trust and control.

For creators, this isn't just about catching fakes. It's about protecting their identity, securing their income, and maintaining the integrity of their art in a rapidly changing industry.

Without a reliable way to identify synthetic media, the entire music ecosystem faces a crisis of authenticity. This guide will break down how these digital watchdogs actually work, where they’re being used, and how you can leverage them to safeguard your career.

How an AI Music Detector Actually Works

So, how does a machine actually spot another machine's work? An AI music detector isn’t using some single magic trick. It's more like a team of highly specialized investigators, each using a different method to figure out where a track really came from.

This multi-pronged approach is non-negotiable because AI-generated music is getting scarily good at sounding human. By combining several different analytical techniques, these systems can pick up on subtle clues that would otherwise fly right under the radar. It's this layering of methods that makes the detection process robust and accurate. Each "investigator" is looking for a unique kind of evidence.

Audio Forensic Analysis

First up on the scene is the audio forensics expert. Think of this process like putting a soundwave under a microscope. You're looking for tiny digital fingerprints that are imperceptible to the unaided ear. Human musicians have natural, subtle imperfections in their timing, their pitch, and their rhythm. It's part of what makes music feel alive.

AI models, on the other hand, often produce sounds that are just too perfect. Audio forensics looks for these dead giveaways: unnaturally precise rhythms that never waver, repetitive patterns that lack any human touch, or specific frequency artifacts left behind by the generation algorithm itself. These are the sonic breadcrumbs that betray a non-human creator.
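To make the "too perfect" idea concrete, here is a minimal sketch of one such check: measuring how far note onsets drift from a strict tempo grid. It assumes the onset times and tempo have already been extracted by some other tool. The function name `timing_jitter_ms`, the sample onsets, and the 2 ms threshold are all illustrative assumptions, not values from any real detector.

```python
from statistics import pstdev

def timing_jitter_ms(onsets_s, tempo_bpm, subdivisions=4):
    """Standard deviation (ms) of each onset's offset from the nearest grid step."""
    step = 60.0 / tempo_bpm / subdivisions  # grid spacing in seconds
    offsets_ms = []
    for t in onsets_s:
        nearest = round(t / step) * step
        offsets_ms.append((t - nearest) * 1000.0)
    return pstdev(offsets_ms)

# Hypothetical onset times (seconds) at 120 BPM on a sixteenth-note grid (0.125 s).
quantized = [0.0, 0.125, 0.25, 0.375, 0.5, 0.625]
played = [0.004, 0.118, 0.259, 0.371, 0.507, 0.619]

JITTER_FLOOR_MS = 2.0  # assumed threshold, chosen only for this example

for name, onsets in [("quantized", quantized), ("played", played)]:
    jitter = timing_jitter_ms(onsets, tempo_bpm=120)
    verdict = "suspiciously rigid" if jitter < JITTER_FLOOR_MS else "human-like variation"
    print(f"{name}: {jitter:.2f} ms jitter, {verdict}")
```

Keep in mind that a heavily quantized human production would score just like the first example, so a check like this can only ever be one signal among several.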

Provenance and Metadata Scrutiny

Next, the provenance analyst steps in. This expert is all about investigating a song's digital passport—its metadata and creation history. Every digital audio file carries a story within its data, like the software it was made with, encoding dates, and artist info.

An AI music detector cross-references this data, sniffing out inconsistencies. For example, if a track claims to be a lost recording from the 1970s but its metadata shows it was created with a 2024 AI model, that's a massive red flag.

This method is all about verifying the story a track tells about itself. Does its digital history actually line up with its claims? It's a critical step in separating legitimate recordings from fraudulent uploads designed to cash in on older styles or mimic specific artists.
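Here is a rough sketch of what that cross-referencing could look like in code. The metadata field names, the `KNOWN_AI_TOOLS` registry, and the tool name "ExampleGen" are hypothetical, made up purely for illustration; real files store this information in format-specific tags that you would need a tag-reading library to extract.

```python
from datetime import date

# Hypothetical registry: lowercase tool name -> year the tool first shipped.
KNOWN_AI_TOOLS = {"examplegen": 2024}

def provenance_flags(meta, known_ai_tools=KNOWN_AI_TOOLS):
    """Return a list of inconsistencies between a track's claims and its metadata."""
    flags = []
    encoder = (meta.get("encoder") or "").lower()
    claimed_year = meta.get("claimed_recording_year")
    encoded_on = meta.get("encoded_on")

    if not encoder:
        flags.append("encoder field is empty (metadata may have been stripped)")
    for tool, release_year in known_ai_tools.items():
        if tool in encoder:
            flags.append(f"encoder names a known AI tool: {tool}")
            if claimed_year is not None and claimed_year < release_year:
                flags.append(
                    f"claimed year {claimed_year} predates the tool ({release_year})"
                )
    if encoded_on is not None and encoded_on > date.today():
        flags.append("encoding date is in the future")
    return flags

# A made-up "lost 1970s recording" whose metadata tells a different story.
track = {
    "title": "Lost Studio Session",
    "claimed_recording_year": 1974,
    "encoder": "ExampleGen 2.1",
    "encoded_on": date(2024, 5, 1),
}
for flag in provenance_flags(track):
    print("RED FLAG:", flag)
```

Notice that an empty encoder field is treated as a flag too, since stripped metadata is itself a clue worth a second look.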

To give you a clearer picture, here's a breakdown of how these different detection methods stack up against each other. Each one brings something unique to the table.

Comparing AI Music Detection Techniques

This table breaks down the primary methods used by AI music detectors, outlining how each one works, its main strengths, and potential weaknesses.

| Detection Technique | How It Works | Primary Strength | Potential Weakness |
|---|---|---|---|
| Audio Forensic Analysis | Scans the audio waveform for unnatural perfection, repetition, or artifacts left by AI models. | Can identify AI tracks even without metadata, based purely on the sound itself. | Advanced AI models are learning to mimic human-like imperfections, making detection harder. |
| Provenance & Metadata | Cross-references a track's embedded data (creation date, software used) against its claims. | Excellent for spotting obvious fakes and fraudulent uploads with conflicting histories. | Metadata can be intentionally stripped or manipulated, leaving no trail to follow. |
| Digital Watermarking | Searches for an inaudible, invisible "Made with AI" signature embedded in the audio file. | Provides definitive, undeniable proof of a track's AI origin when present. | Not all AI tools use watermarking, making it an unreliable method on its own. |
| Machine Learning (ML) | An AI trained on vast datasets of human and AI music to recognize subtle sonic patterns. | Incredibly powerful at spotting complex, nuanced differences that other methods miss. | Can produce false positives and requires constant retraining to keep up with new AI models. |

As you can see, relying on just one of these techniques would leave huge blind spots. That's why the best detection systems use a combination of them to get the most accurate result possible.
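One simple way to picture that combination is a weighted average of whatever signals happen to be available for a given track. The sketch below is an assumption about how such a fusion step might look, not a description of any specific product. The signal names, the weights, and the 0-to-1 score scale are all invented for the example.

```python
def combined_score(signals, weights):
    """Weighted average of the available signals; None means 'no evidence either way'."""
    available = {name: s for name, s in signals.items() if s is not None}
    if not available:
        return None  # nothing to go on, so make no call at all
    total_weight = sum(weights[name] for name in available)
    return sum(weights[name] * s for name, s in available.items()) / total_weight

# Assumed weights: watermark and ML evidence count double in this toy setup.
WEIGHTS = {"forensics": 1.0, "metadata": 1.0, "watermark": 2.0, "ml": 2.0}

# A track with stripped metadata and no watermark: only two signals report a score.
signals = {"forensics": 0.7, "metadata": None, "watermark": None, "ml": 0.9}
score = combined_score(signals, WEIGHTS)
print(f"Combined AI likelihood: {score:.2f}")
```

Skipping missing signals, rather than counting them as zero, keeps a stripped metadata field from quietly dragging the score toward "human".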

Digital Watermarking Verification

Some detection methods are way more straightforward, thanks to digital watermarking. You can think of this as an invisible "Made with AI" tag embedded directly into the audio file. This watermark is completely inaudible to us but is easily picked up by a specialized detector.

While it's not a universal standard yet, it's becoming a more common practice for responsible AI platforms looking to build in transparency. When a detector finds one of these digital signatures, it’s game over—you have definitive proof of the track's synthetic origins.
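For a feel of how an inaudible tag can be found again, here is a toy version of one classic idea: add a very quiet pseudo-random sequence generated from a secret key, then check later whether the audio correlates with that same sequence. This is a simplified sketch under stated assumptions. The function names, the key values, and the noise used as stand-in audio are hypothetical, and production watermarks are far more involved because they have to survive compression and editing.

```python
import random

def keyed_sequence(key, n):
    """Pseudo-random +1/-1 sequence that only the key holder can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]

def embed_watermark(samples, key, strength=0.01):
    """Add the keyed sequence to the audio at a very low level."""
    mark = keyed_sequence(key, len(samples))
    return [s + strength * m for s, m in zip(samples, mark)]

def has_watermark(samples, key, strength=0.01):
    """Correlate the audio with the keyed sequence; a mark pushes this toward `strength`."""
    mark = keyed_sequence(key, len(samples))
    correlation = sum(s * m for s, m in zip(samples, mark)) / len(samples)
    return correlation > strength / 2

# Stand-in "audio": 20,000 samples of Gaussian noise (an assumption for the demo).
noise = random.Random(7)
clean = [noise.gauss(0.0, 0.1) for _ in range(20_000)]
marked = embed_watermark(clean, key=1234)

print("marked audio, right key:", has_watermark(marked, key=1234))
print("unmarked audio:", has_watermark(clean, key=1234))
print("marked audio, wrong key:", has_watermark(marked, key=9999))
```

The detector needs the same key the generator used, which is part of why watermark checks only work for AI tools that cooperate.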

Machine Learning Classification

Finally, we have the most powerful investigator of all: the machine learning (ML) classifier. This is quite literally an AI trained to catch other AIs. These models are fed millions of songs—some human-made, some AI-generated—and over time, they learn to recognize the subtle, almost imperceptible differences between the two.

It's a lot like an art expert who can spot a forgery by recognizing the unique brushstrokes of a master painter. An ML classifier learns the "sonic brushstrokes" of human creativity versus algorithmic generation. It identifies incredibly complex patterns in harmony, melody, and texture that scream authenticity—or a lack thereof. This ability to spot suspicious activity at scale is crucial, much like the systems in our bot detection tools for Spotify that analyze patterns to keep the platform clean. This advanced pattern recognition makes ML classifiers the cornerstone of modern AI music detection.
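Real classifiers are large neural networks trained on enormous catalogs, but the core loop of "learn from labeled examples, then label something new" fits in a few lines. The sketch below swaps in a nearest-centroid classifier, about the simplest model there is, as a stand-in. The two features (timing jitter and spectral flatness) and every number in the training set are made up for illustration.

```python
from math import dist

def centroid(rows):
    """Average feature vector of a group of examples."""
    return [sum(column) / len(rows) for column in zip(*rows)]

def fit(examples):
    """examples: {label: [feature_vector, ...]} -> {label: centroid}."""
    return {label: centroid(rows) for label, rows in examples.items()}

def predict(model, features):
    """Pick the label whose centroid is closest to the new track's features."""
    return min(model, key=lambda label: dist(model[label], features))

# Hypothetical features per track: [timing jitter in ms, spectral flatness].
# A real system would normalize features and use far more than two of them.
training = {
    "human": [[6.1, 0.22], [7.4, 0.30], [5.2, 0.25]],
    "ai": [[0.8, 0.41], [1.5, 0.38], [0.4, 0.45]],
}
model = fit(training)

print(predict(model, [5.0, 0.28]))
print(predict(model, [1.0, 0.40]))
```

The quality of the labels matters as much as the model. If the "human" examples leave out tightly quantized electronic music, a classifier like this will learn that tight timing always means AI.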

Real-World Applications for AI Music Detectors

An AI music detector is much more than some futuristic idea; it's a very real tool changing how the music industry works, right now. From solo artists trying to protect their work to massive streaming platforms, this tech is becoming a critical line of defense and a new standard for quality control.

For artists and songwriters, these detectors are on the front lines, safeguarding their most valuable assets: their intellectual property. Think of them as automated guardians, constantly scanning for unauthorized uses of a melody, a beat, or even an artist's voice. This is absolutely essential for stopping fraudulent uploads and making sure royalties actually get paid to the right creators.

Protecting Creative Integrity

The most obvious use is protecting an artist’s unique sound and identity. In a world where voice cloning technology is getting easier to use, an AI music detector is vital for flagging unauthorized deepfakes that could wreck an artist’s reputation or divert their income.

Labels and A&R teams are also leaning on this tech as a filter for authenticity. When you're sifting through thousands of demos, a detector helps quickly verify that a submission is original work, not just a repurposed or AI-generated track. This keeps their catalog clean and ensures they’re investing in genuine human talent.

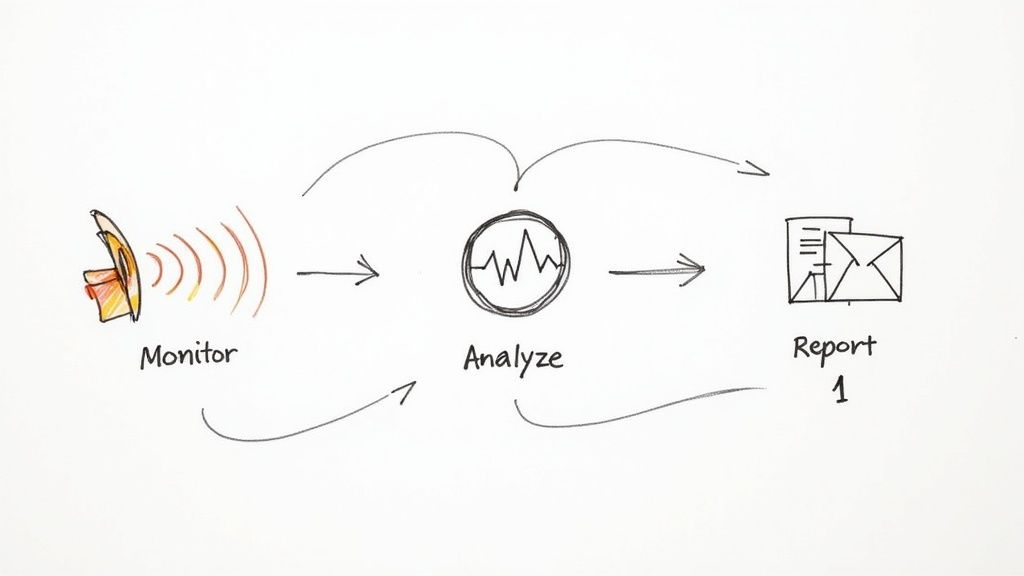

This diagram breaks down the core pieces that make a modern AI music detector system tick.

As you can see, a detector isn't just one thing. It combines multiple methods—like audio forensics, checking a track's origin (provenance), and watermarking—to create a much more reliable verification process.

Maintaining Ecosystem Health

Streaming platforms are probably the biggest users of AI detection, building it right into their content moderation systems. It tackles several key jobs for them:

- Fighting Streaming Fraud: Detectors are great at identifying and flagging bot-like or spammy AI-generated tracks that get uploaded in bulk to game the system and steal royalties.
- Enforcing Content Policies: Platforms can automatically enforce their own rules against fake or deceptive synthetic content, taking down tracks that impersonate artists without permission.
- Powering Transparency: This is the tech that will power new "Made with AI" labels, giving listeners clear and honest info about how a song was actually created.

By sniffing out and filtering AI-generated spam, platforms protect the royalty pool. This makes sure money flows to legitimate artists who are playing by the rules, which is vital for keeping the whole ecosystem fair and trustworthy.

At the end of the day, an AI music detector isn’t just about catching fakes. It's about building a more transparent, secure, and fair playground for everyone creating and listening to music. It provides the checks and balances we need to make sure human creativity continues to be supported.

The Limitations and Challenges of Detection Technology

While an AI music detector is a powerful concept, in practice it is far from a perfect solution. The technology is caught in a relentless cat-and-mouse game. As generative AI models get better at creating more nuanced, human-sounding music, detection algorithms have to constantly play catch-up.

This creates an arms race where the methods that work today might be totally useless tomorrow. The second a detector learns to spot a specific digital flaw, the next generation of AI music tools is already learning how to erase it. This constant evolution means there’s no permanent "fix" for sniffing out AI content.

The Problem of False Positives

One of the biggest headaches with this technology is false positives. This is what happens when a detector mistakenly flags heavily processed or super-polished human-made music as being AI-generated. For a real, working artist, this is a nightmare scenario.

Just think about an electronic music producer who uses complex software, Auto-Tune, and precise quantization to get that perfect sound. Their clean, flawless-sounding track could easily get flagged by an AI detector that’s been trained to look for exactly that kind of unnatural perfection.

A false positive can get a track unfairly penalized, kicked off playlists, or even removed from streaming services entirely. This ends up hurting the very artists these tools are supposed to protect, adding a new layer of stress for creators who just want to use modern production techniques.

Artists and labels need to understand this risk. It shows why we need a more careful approach, where a detector’s flag is just the start of an investigation, not the final word. This is especially true when you're looking into issues like unmasking Spotify fake streams and protecting your music, where getting the facts right is absolutely critical.
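A bit of arithmetic shows why even a small error rate matters at platform scale. The numbers below are entirely hypothetical: a batch of 100,000 uploads, 20% of them AI-generated, and a detector that catches 95% of AI tracks while wrongly flagging 2% of human ones. None of these rates come from a real system.

```python
def flag_breakdown(human_tracks, ai_tracks, true_positive_rate, false_positive_rate):
    """Return (AI tracks caught, human tracks wrongly flagged, share of flags that are right)."""
    caught_ai = ai_tracks * true_positive_rate
    wrongly_flagged = human_tracks * false_positive_rate
    precision = caught_ai / (caught_ai + wrongly_flagged)
    return caught_ai, wrongly_flagged, precision

# Assumed batch: 80,000 human tracks and 20,000 AI tracks.
caught, wrong, precision = flag_breakdown(80_000, 20_000, 0.95, 0.02)
print(f"AI tracks caught: {caught:.0f}")
print(f"Human tracks wrongly flagged: {wrong:.0f}")
print(f"Share of flags that are correct: {precision:.1%}")
```

Under these assumptions, a 2% false positive rate still means 1,600 human-made tracks get flagged in a single batch, which is exactly why a flag should trigger a review rather than an automatic takedown.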

The Challenge of Hybrid Music

Making things even trickier is the rise of hybrid tracks—songs that seamlessly blend human and AI elements. An artist might use an AI tool to spit out a cool drum loop or a chord progression, then build the entire song around it with live guitars and their own vocals.

How is a detector supposed to classify a track like that?

Is it human or AI?

Where do you even draw the line?

These hybrid creations completely blur the lines for any detection system, making a simple "yes" or "no" answer feel impossible. The music isn't 100% human, but it isn't 100% machine-made, either. As this collaborative style becomes more common, detectors will have to move beyond binary labels. Maybe they'll need to show the percentage of AI contribution instead of just making a black-and-white call.
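One way a detector could report a percentage instead of a verdict is to score a track section by section and add up the runtime that looks synthetic. The sketch below assumes some upstream model has already produced a per-segment AI probability. The segment data, the function name `ai_share`, and the 0.5 threshold are illustrative assumptions.

```python
def ai_share(segments, threshold=0.5):
    """segments: list of (duration_seconds, ai_probability) pairs.

    Returns the fraction of total runtime whose segments score at or above the threshold.
    """
    total = sum(duration for duration, _ in segments)
    flagged = sum(duration for duration, p in segments if p >= threshold)
    return flagged / total if total else 0.0

# Hypothetical 3-minute track: AI-sounding intro and outro, human-sounding middle.
segments = [(30, 0.9), (60, 0.1), (30, 0.2), (60, 0.8)]
print(f"{ai_share(segments):.0%} of the runtime looks AI-generated")
```

A time-based split is only one option. The same idea could run per stem (drums, vocals, guitars) if the detector can separate them.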

Why Detection Tools Are Suddenly Everywhere

The sudden push for a reliable AI music detector isn’t happening in a vacuum. It’s a direct answer to a market that is absolutely exploding with cash and new content. The sheer amount of money and AI-generated tracks flooding the system has created a desperate need for some technological guardrails.

This isn't about a few artists messing around with new tech. We're talking about a seismic economic shift. The generative AI music market is on a trajectory to blast off from $558.4 million in 2024 to a staggering $7.41 billion by 2035. This rocket ship is being fueled by producers jumping on board (36.8% adoption) and a huge migration to cloud-based creation tools, which now dominate 71.4% of the market. You can dig into more of the mind-boggling numbers in this research on AI music industry statistics.

A Necessary Reaction to Market Pressure

This tidal wave of AI content puts immense strain on the entire music ecosystem. With billions of dollars flying around, the motivation for bad actors to game streaming platforms, steal copyrights, and clone voices has never been stronger. The market's growth isn't just a trend; it's a force that's prying open new vulnerabilities.

And that's exactly where the demand for detection tools comes from. It’s a classic story of new technology needing a counter-punch.

When a new technology opens the door for misuse at a massive scale, a corresponding "trust and safety" technology has to rise up to meet it. An AI music detector is the music industry's immune system for this new reality.

Key players are already building these defenses. Companies like Pex have developed seriously sophisticated tech to sniff out unauthorized voice clones, tackling one of the most personal and creepy forms of infringement head-on.

The Research Fueling the Tools

At the same time, specialized research hubs are pushing the envelope on what's even possible. Places like IRCAM, a French institute dedicated to the science of music and sound, are on the front lines developing advanced AI music detector systems. Their work isn't just for academic papers; it’s the foundational science that ends up powering the commercial tools that artists and labels actually use.

These solutions, coming from both private companies and research labs, aren't just nice-to-have features. They are a direct and vital response to the powerful economic forces reshaping how music gets made, shared, and paid for. Without them, the integrity of the whole market would be on shaky ground.

A Practical Workflow for Protecting Your Music

Knowing the risks of AI-generated music is one thing, but actually defending your work requires a clear game plan. Let's break down the process into three manageable stages, turning abstract threats into a concrete defense strategy you can use today.

Your first line of defense is proactive monitoring. This means consistently scanning streaming platforms for unauthorized uses of your sound, voice, or melodies. This isn't about spending hours manually searching; it's about using specialized tools built for this exact purpose to keep an eye on things for you.

Analysis and Verification

Once a tool flags a potential issue, the next step is to dig in and verify it. This is where you put on your detective hat to confirm if the activity is truly sketchy. For example, using a playlist analysis tool helps you spot the weird anomalies that often pop up around fake tracks, like bizarre streaming patterns or placement on a bunch of low-quality, botted playlists.

Identifying these red flags early is crucial. If a track mimicking your style lands on a network of fraudulent playlists, it can rack up fake streams and siphon off royalties that should have gone to real artists. If you find yourself in that situation, we've got a guide on how to get out of a botted playlist and clean up your profile.
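If you want a feel for what "bizarre streaming patterns" means in numbers, here is a minimal sketch that flags days whose stream counts sit far outside a track's usual range. It uses a median-based outlier score so that one huge spike does not distort the baseline. The daily counts are made up, and this is a generic statistical check, not the method any particular playlist analysis tool uses.

```python
from statistics import median

def spike_days(daily_streams, cutoff=3.5):
    """Return indexes of days whose stream count is an outlier (modified z-score)."""
    center = median(daily_streams)
    # Median absolute deviation: a spread measure that ignores extreme values.
    mad = median([abs(x - center) for x in daily_streams])
    if mad == 0:
        return []  # every day looks identical, so there is no spread to measure against
    return [
        day for day, x in enumerate(daily_streams)
        if 0.6745 * abs(x - center) / mad > cutoff
    ]

# Hypothetical week of streams with one suspicious jump on day index 5.
week = [120, 135, 128, 140, 131, 5200, 126]
print("Days worth a closer look:", spike_days(week))
```

A spike is not proof of fraud by itself, since a legitimate playlist add or a viral clip looks similar, so treat the output as a prompt to check where the streams came from.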

Reporting and Takedown

After you've confirmed an infringement, the final stage is getting it taken down. Every major streaming platform has a specific process for submitting claims of copyright infringement or unauthorized use of your identity. Getting familiar with these systems is key to getting fraudulent content removed quickly and without a headache.

This three-step process—monitor, analyze, report—creates a continuous feedback loop. It empowers you to not just react to problems but to actively manage and protect your digital presence and your music.

The good news is that the industry is building better systems to help. In response to these challenges, dedicated tools like voice clone detectors and more advanced AI music detectors are starting to emerge. Platforms are also adding more layers of verification, from scanning new uploads for AI similarities to introducing "Made with AI" labels for transparency. It's a group effort, and your personal workflow is a critical piece of the puzzle in strengthening the ecosystem for all creators.

Got Questions? We've Got Answers.

Diving into the world of AI-generated music can feel a little confusing. Let's clear up some of the most common questions artists and music pros have about using an AI music detector.

Can AI Detectors Pinpoint Exactly Which AI Model Made a Song?

Yes, some of the more sophisticated systems absolutely can. While many detectors give you a basic "human vs. machine" verdict, the really advanced tools are trained to spot the unique sonic fingerprints left behind by specific AI music generators.

Think of it like a detective who doesn't just know a banknote is fake, but can identify the exact printing press it came from. This is a huge deal for tracing the source of unauthorized tracks or deepfakes.
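In code terms, that kind of attribution can be pictured as comparing a track's fingerprint against reference fingerprints for known generators and refusing to guess when nothing matches well. The sketch below is an illustration only. The generator names, the three-number fingerprints, and the 0.95 similarity cutoff are hypothetical, and real fingerprints would be much higher-dimensional.

```python
from math import sqrt

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b)))

def attribute(fingerprint, references, min_similarity=0.95):
    """Name the closest known generator, or 'unknown' if no reference is close enough."""
    best = max(references, key=lambda name: cosine(fingerprint, references[name]))
    if cosine(fingerprint, references[best]) >= min_similarity:
        return best
    return "unknown"

# Hypothetical reference fingerprints for two made-up generators.
references = {
    "generator_a": [0.9, 0.1, 0.3],
    "generator_b": [0.2, 0.8, 0.5],
}

print(attribute([0.85, 0.15, 0.32], references))
print(attribute([0.5, 0.5, 0.5], references))
```

The "unknown" fallback matters because new generators appear constantly, and forcing every track into a known bucket would produce confident but wrong attributions.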

Is It Actually Illegal to Upload AI-Generated Music?

This is where it gets tricky—it all depends on the situation. If you create a completely original track using AI tools and own all the rights, you're generally in the clear on platforms that allow it.

But you cross a serious legal line when you infringe on someone else's rights.

That includes:

- Using an artist's voice without their explicit permission (a violation of their right of publicity).
- Copying a protected melody or a signature instrumental hook.
- Passing off an AI track as the work of a human artist, especially a famous one.

Streaming platforms are scrambling to update their policies to handle these issues, so it's on you to stay current with their rules.

The real dividing line is between original creation and outright infringement. An AI music detector is one of the key tools platforms use to patrol that border, flagging content that "borrows" too heavily from protected works or steals an artist's identity.

How Can I Stop AI Companies from Training Models on My Music?

Being proactive is your best defense here. A new wave of services allows artists to add inaudible watermarks or "data poisons" to their tracks.

These additions are designed to be imperceptible to a listener. A watermark helps you show that a track was yours if it turns up somewhere it shouldn't, while a data poison is designed to scramble and corrupt the training process if an AI company scrapes your music without permission. It's also smart to regularly review the terms of service for your distributor and streaming platforms to see exactly how they can use your music and data.

Ready to protect your music and get a clear view of where you stand in the market? artist.tools provides a full suite of analytics, including our crucial playlist monitoring and bot detection features, to help you safeguard your career. Take control of your data by visiting artist.tools today.
